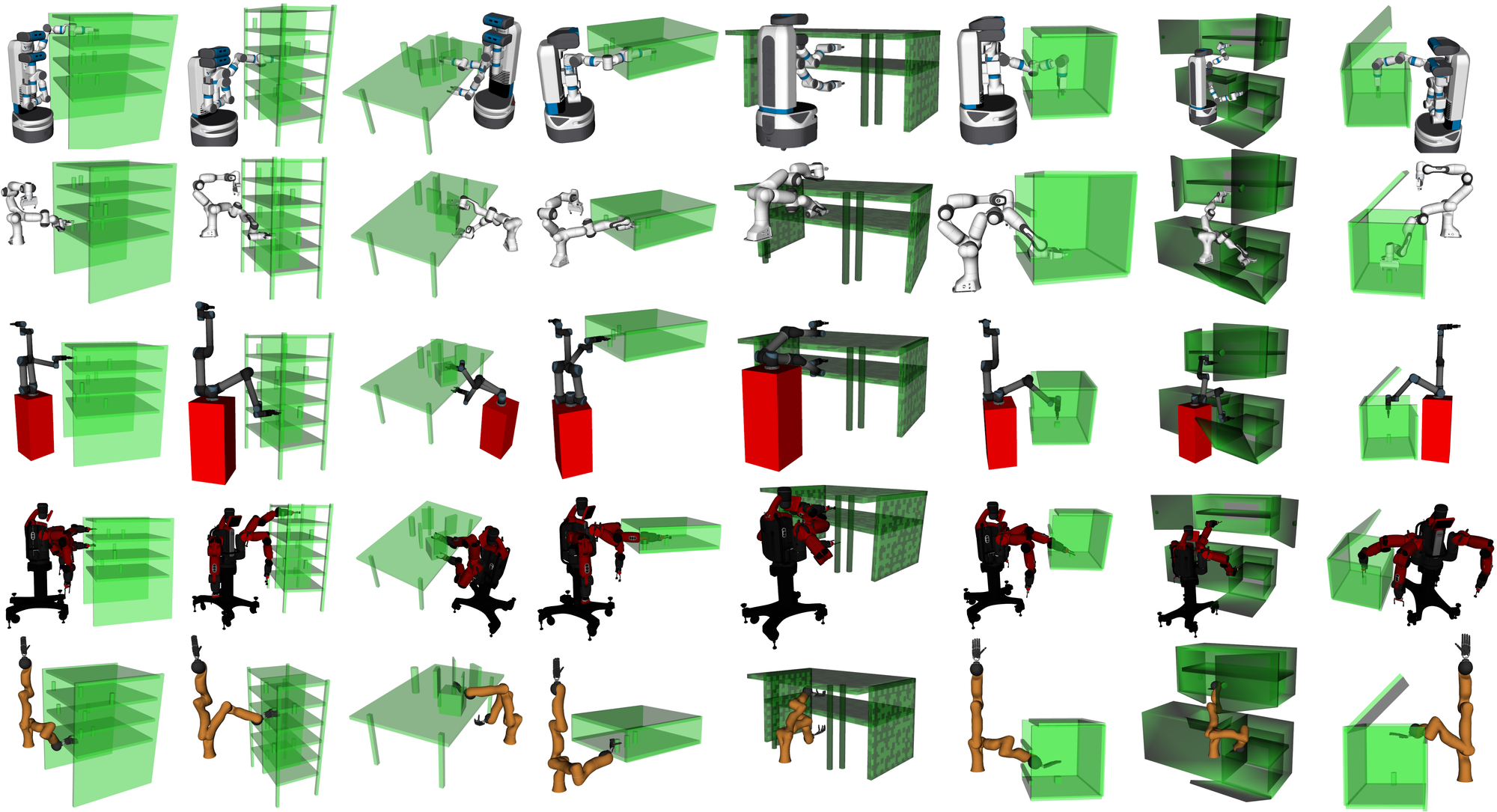

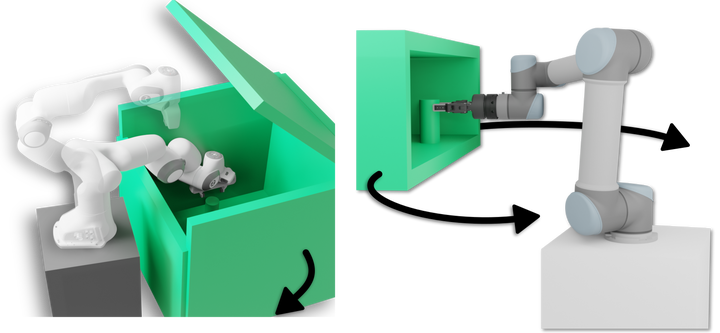

Examples of environments and robots in our pre-generated dataset of motion planning problems. The black arrows show the directions in which the nominal scenes can be perturbed to create new problems, for example by sampling the relative orientation of the robot.

Evaluating motion planning algorithms for high degree-of-freedom robots (i.e., manipulators) can be challenging, time-consuming, and prone to bias, and the results are hard to compare against state-of-the-art planners. Additionally, with the advent of learning methods in planning for robotics, it is paramount to be able to generate datasets of meaningful problems to train and evaluate new models. In this paper we introduce MotionBenchMaker, an open-source tool to generate datasets for benchmarking and training on realistic robot manipulation problems. Our tool is designed to be easy to extend to new problems and robots, and it comes with tools for running motion planning benchmarks.

Features of MotionBenchMaker

These are some of the features that MotionBenchMaker offers:

- It is written in C++.

- It supports ROS and MoveIt, making it easy to integrate into existing robotics implementations.

- Configuration of new environments and robots is done through standard formats such as YAML, URDF, and SRDF files.

- It comes with sampling-based planners from OMPL such as RRT-Connect, PRM, KPIECE, etc.

- It provides tools, such as the problem generator and the scene sampler, to easily specify environment transformations and automatically generate interesting motion planning problems.

- It comes with prefabricated datasets that comprise 5 commonly used robots and 8 environments that are actively being used by the community to assess the performance of new motion planning algorithms.

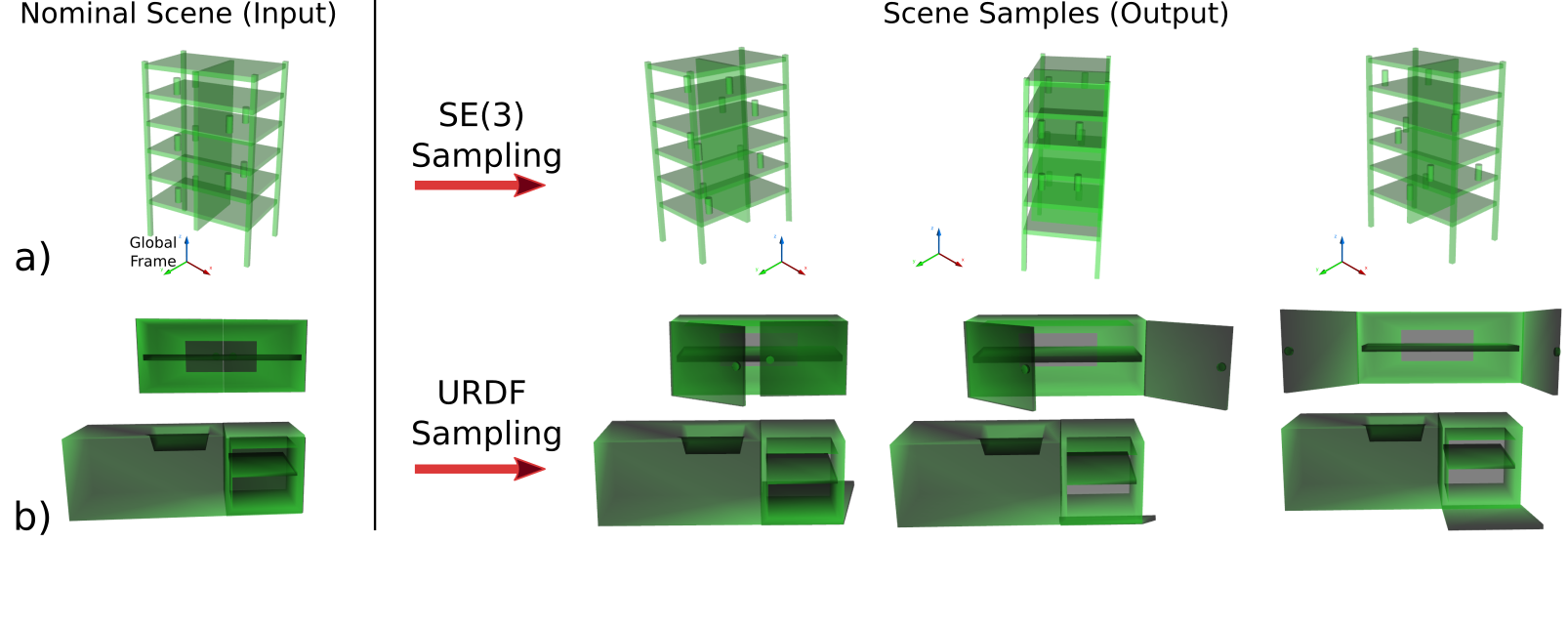

Scene Sampler and Problem Generator

The main functionality of MotionBenchMaker is its ability to create new problems by 1) easily specifying (random) transformations of objects in a nominal scene to generate distinct problems from a similar domain and 2) automating the specification of motion planning problems (start/goal configurations) for manipulation by describing relationships between robot end-effectors and objects in the scene. The first component is achieved by a scene sampler, which can apply SE(3) sampling to objects in the scene as well as URDF sampling, as shown in the figure below:

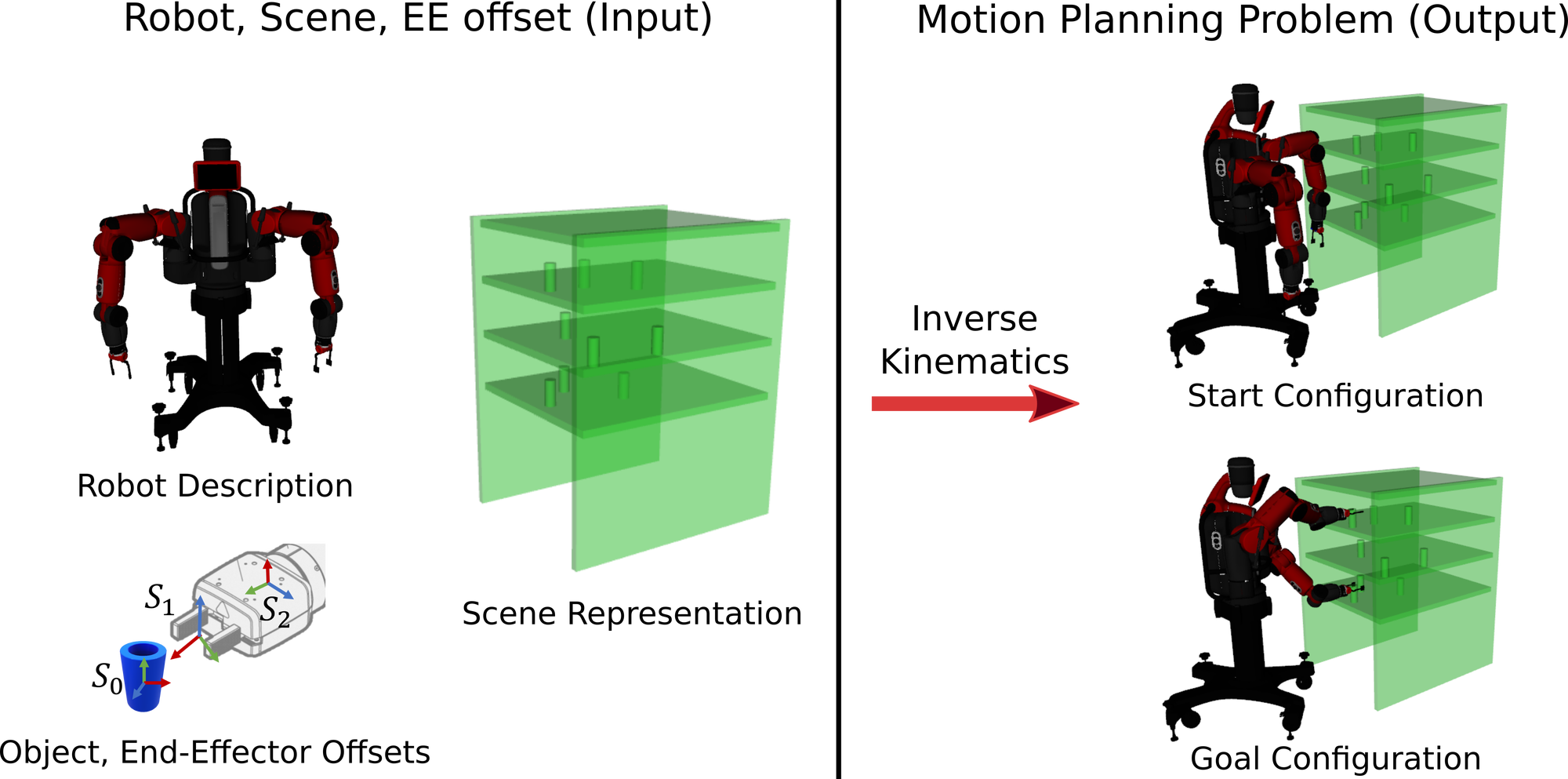
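The SE(3) sampling idea can be illustrated with a minimal Python sketch. This is not MotionBenchMaker's C++ implementation or API; the function names, the uniform distribution, the roll/pitch/yaw parameterization, and the choice to apply the offset in the object's local frame are all assumptions made for illustration.

```python
import math
import random


def rpy_to_matrix(roll, pitch, yaw):
    """3x3 rotation matrix R = Rz(yaw) * Ry(pitch) * Rx(roll)."""
    cr, sr = math.cos(roll), math.sin(roll)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cy, sy = math.cos(yaw), math.sin(yaw)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]


def make_transform(rotation, translation):
    """4x4 homogeneous transform from a 3x3 rotation and a 3-vector."""
    rows = [rotation[i] + [translation[i]] for i in range(3)]
    return rows + [[0.0, 0.0, 0.0, 1.0]]


def compose(a, b):
    """Matrix product a * b of two 4x4 transforms."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def sample_perturbed_pose(nominal, pos_bounds, rpy_bounds, rng):
    """Sample a pose near `nominal` (hypothetical helper).

    Each offset component is drawn uniformly from [-bound, +bound];
    the offset is applied in the object's local frame (an assumption).
    """
    offset_xyz = [rng.uniform(-b, b) for b in pos_bounds]
    offset_rpy = [rng.uniform(-b, b) for b in rpy_bounds]
    offset = make_transform(rpy_to_matrix(*offset_rpy), offset_xyz)
    return compose(nominal, offset)
```

For instance, bounds of `[0.1, 0.1, 0.0]` on position and `[0.0, 0.0, math.pi / 4]` on orientation would slide an object up to 10 cm in its own x-y plane and rotate it up to 45 degrees about its vertical axis, which produces a family of related but distinct scenes from one nominal scene.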

The Problem Generator takes as inputs a robot description (URDF), a geometric scene, and object-centric end-effector poses, and creates a motion planning problem (start and goal configurations in the form of a MoveIt! request). Additional transformations can be provided to set the robot base pose. The figure below shows examples.
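The geometric core of an object-centric query can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the tool's actual code: the function names are hypothetical, poses are plain 4x4 homogeneous matrices, and the inverse-kinematics step that would turn the resulting end-effector pose into a joint-space goal is omitted.

```python
def compose(a, b):
    """Matrix product a * b of two 4x4 transforms."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def invert(t):
    """Invert a rigid transform: [R p]^-1 = [R^T, -R^T p]."""
    rt = [[t[j][i] for j in range(3)] for i in range(3)]
    p = [-sum(rt[i][k] * t[k][3] for k in range(3)) for i in range(3)]
    return [rt[i] + [p[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]


def translation(x, y, z):
    """Pure-translation 4x4 transform."""
    return [[1.0, 0.0, 0.0, x],
            [0.0, 1.0, 0.0, y],
            [0.0, 0.0, 1.0, z],
            [0.0, 0.0, 0.0, 1.0]]


def end_effector_target(object_in_world, ee_in_object, base_in_world):
    """End-effector goal pose expressed in the robot base frame.

    `ee_in_object` is the object-centric offset (e.g. a grasp pose
    relative to the object); all names here are hypothetical.
    """
    ee_in_world = compose(object_in_world, ee_in_object)
    return compose(invert(base_in_world), ee_in_world)
```

Because the end-effector pose is defined relative to the object, moving the object or the robot base changes the resulting goal automatically, so the same description can be reused across every sampled scene.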

Prefabricated dataset

MotionBenchMaker comes with a set of prefabricated problems: interesting motion planning queries posed for commonly used robots.